10 Gbit on the Cheap

Oct 13, 2017

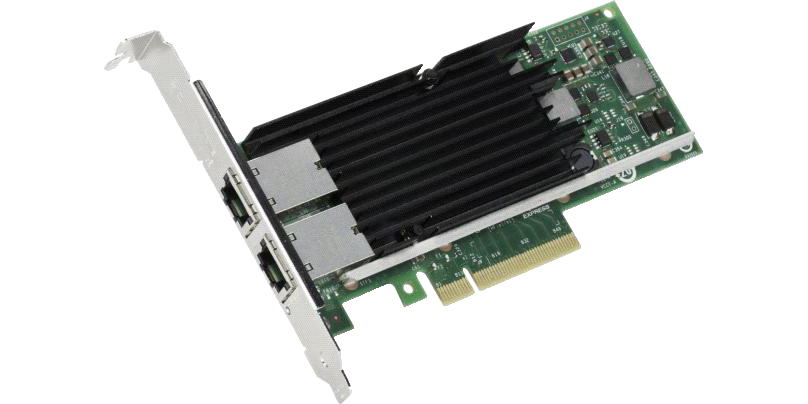

I have been dying to get my hands on some 10 Gbit networking equipment. The problem is that I always wanted 10 Gbit Ethernet over regular copper cabling (10GBASE-T with RJ45 ports, not SFP+), which is still very expensive. I base that on the prices of the Intel X540-T1/T2, which are still over $150 on Amazon. They are cheaper on eBay, but the ones shipped from China are most likely fakes or use low-quality components.

Switches for 10 GbE are also very expensive, and most have only two 10G uplink ports, which is kind of useless for me. I would want all 10G with at least 8 ports so that copying files from machine to machine is always fast. I rarely need to access files simultaneously across multiple machines, but I do copy large files on all machines.

The alternative is to grab a pair of Mellanox ConnectX-2 cards that are SFP+. You can get two used cards on Amazon for $40.99. I would not be willing to pay more than $20-$30 per card.

You will need one SFP+ cable (Twinax DAC) to connect the machines. These are $17.88 brand new on Amazon. If you want to go cheaper, you can grab one from fs.com. I bought the Ubiquiti-branded one, which works fine connecting the two Mellanox cards directly. I chose it because I will most likely end up with a Ubiquiti 10 Gbit switch at some point, and their stuff is picky about DAC cables.

One drawback to this setup is that the longer the DAC cable, the more expensive it is. If you have a FreeNAS server in another room then you probably won’t be able to get a DAC cable long enough. You would then need 10 Gbit transceivers and fiber cabling. That’s where 10 GbE over regular copper cabling (10GBASE-T) is much simpler. But since my servers are literally on top of each other, it works perfectly.

As long as you have FreeNAS 9.10+ everything should be plug and play. Just set up a static private IP in FreeNAS, do the same on the other end (on the same subnet), and it all works. Make sure you change the MTU to 9000 on both ends, otherwise you will not be able to achieve 10G speeds.
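To put rough numbers on the MTU advice, here is a back-of-the-envelope sketch in Python. It assumes standard Ethernet framing, IPv4, and TCP with no options, and only considers full-size frames. The function and constant names are made up for this illustration. It is an estimate, not a measurement of this setup.

```python
# Fixed per-frame costs in bytes (assumed: standard Ethernet, IPv4, TCP without options).
ETH_WIRE_OVERHEAD = 8 + 14 + 4 + 12   # preamble+SFD, Ethernet header, FCS, inter-frame gap
IP_TCP_HEADERS = 20 + 20              # IPv4 header + TCP header, both inside the MTU
LINK_BPS = 10_000_000_000             # 10 Gbit/s line rate


def frame_stats(mtu):
    """Return (payload efficiency, max goodput in Gbit/s, frames per second)
    for a link saturated with full-size frames at the given MTU."""
    wire_bytes = mtu + ETH_WIRE_OVERHEAD      # bytes each frame occupies on the wire
    payload = mtu - IP_TCP_HEADERS            # application bytes carried per frame
    efficiency = payload / wire_bytes
    goodput_gbps = efficiency * LINK_BPS / 1e9
    frames_per_sec = LINK_BPS / (wire_bytes * 8)
    return efficiency, goodput_gbps, frames_per_sec


for mtu in (1500, 9000):
    eff, gbps, fps = frame_stats(mtu)
    print(f"MTU {mtu}: {eff:.1%} efficient, {gbps:.2f} Gbit/s goodput, {fps:,.0f} frames/s")
```

Under these assumptions the theoretical ceiling only moves from about 9.5 to about 9.9 Gbit/s. The bigger difference is the frame rate: roughly 813,000 frames per second at MTU 1500 versus roughly 138,000 at MTU 9000. That means far less per-packet work for the CPU and the card, which is probably where most of the real-world gain comes from.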

I’m able to completely max out 10 Gbit using iperf from FreeNAS to a Debian server. For 10G inside a KVM virtual machine, the simplest thing I could come up with was to create a bridge with the one 10G interface and add that to the qemu configuration. Anything that could benefit from the extra bandwidth (iSCSI / sshfs) connects through the private 10G network between the servers.
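For anyone curious what a tool like iperf measures at its core, here is a minimal Python sketch of a single-stream TCP throughput test. It is not a replacement for iperf and not how I tested the link. It runs sender and receiver in one process over loopback, and the function name and defaults are invented for this example. Python's own overhead will likely cap the result well below what iperf reports.

```python
import socket
import threading
import time


def measure_throughput(host="127.0.0.1", total_bytes=64 * 1024 * 1024, chunk=1024 * 1024):
    """Push total_bytes through one TCP connection and return
    (bytes received, Gbit/s as seen by the receiver)."""
    server = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
    server.setsockopt(socket.SOL_SOCKET, socket.SO_REUSEADDR, 1)
    server.bind((host, 0))            # port 0 lets the OS pick a free port
    server.listen(1)
    port = server.getsockname()[1]
    result = {}

    def receiver():
        conn, _ = server.accept()
        received = 0
        start = time.perf_counter()
        with conn:
            while True:
                data = conn.recv(chunk)
                if not data:          # sender closed the connection
                    break
                received += len(data)
        result["bytes"] = received
        result["seconds"] = time.perf_counter() - start

    worker = threading.Thread(target=receiver, daemon=True)
    worker.start()

    payload = b"\0" * chunk
    with socket.create_connection((host, port)) as client:
        sent = 0
        while sent < total_bytes:
            client.sendall(payload)
            sent += chunk

    worker.join()
    server.close()
    gbits_per_sec = result["bytes"] * 8 / result["seconds"] / 1e9
    return result["bytes"], gbits_per_sec


if __name__ == "__main__":
    received, rate = measure_throughput()
    print(f"received {received} bytes at {rate:.2f} Gbit/s")
```

To test a real link you would split the receiver and sender across the two machines and bind to the private 10G addresses. At that point iperf is the easier option.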